Process and Thread: What Are the Differences?

Process

The process is a fundamental concept in computer science. To understand what a process is, it helps to first learn a little about the history of computer systems.

In the early 1960s, many computers, such as the IBM 7090, were based on batch processing. Programmers used keypunch machines to prepare punch cards.

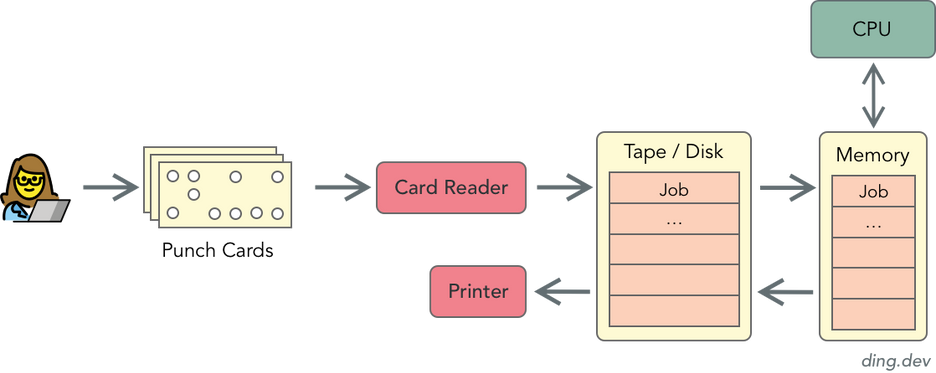

Punch cards were read by card readers. Card readers transformed the punch cards into digital data and instructions, which were then stored on magnetic tape units (and later, disks) attached through data channels.

The data and instructions were then loaded into memory and sent to the CPU for processing. After processing, the output data was typically printed out by a printer.

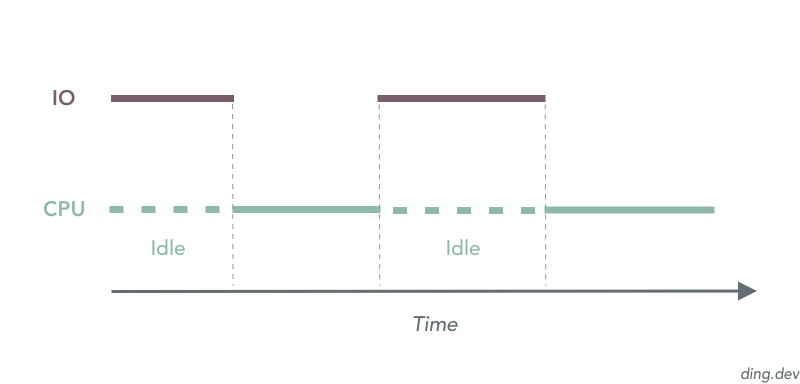

The workflow looks fine so far, but a problem emerges when a program performs IO operations, for example, driving the printer. IO operations are usually much slower than the CPU, especially when the IO went through cards, magnetic tapes, and printers, as it did in the 1960s. Even worse, the CPU has to wait until the IO operation finishes.

Long-running IO operations result in low average CPU utilization, which was far from ideal given the high cost of computer systems at that time. To solve this issue, engineers created multiprogramming systems. Multiprogramming systems, like the LEO III, can load several programs into the computer's memory at once. Programs in memory run on a first-come, first-served basis.
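To make the utilization argument concrete, here is a back-of-the-envelope sketch. The numbers and the `cpu_utilization` function are my own illustration, and the model is deliberately idealized: every program alternates one CPU burst with one IO wait, and the IO waits of different programs overlap perfectly.

```python
def cpu_utilization(cpu_seconds: float, io_seconds: float, programs: int = 1) -> float:
    """Fraction of time the CPU is busy in a simplified, idealized model."""
    cycle = cpu_seconds + io_seconds      # one CPU burst plus one IO wait
    busy = programs * cpu_seconds         # CPU work available during one cycle
    return min(1.0, busy / cycle)         # the CPU cannot be more than 100% busy


# One program that computes for 1 second and then waits 4 seconds for IO
# keeps the CPU busy only a fifth of the time.
print(cpu_utilization(1, 4))              # prints 0.2

# With three such programs in memory, the CPU can work on one while the
# others wait for IO.
print(cpu_utilization(1, 4, programs=3))  # prints 0.6
```

Real systems never overlap IO this neatly, so treat the result as an upper bound rather than a prediction.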

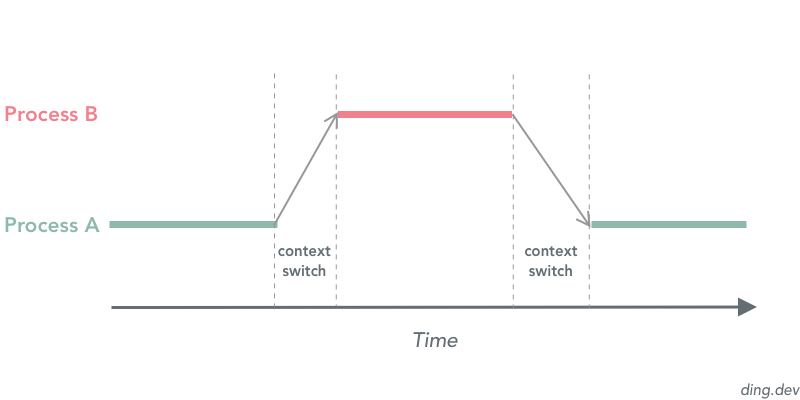

When program A reaches an instruction that waits for an IO operation, the system stores the context of program A, then picks up program B from memory and executes it. When the IO operation of A finishes, the system stores the context of program B and restores the context of program A to continue its execution.
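The switching described above can be imitated with Python generators. This is only a toy model, not how an operating system is implemented: `program` and `run` are hypothetical names, each `yield` stands for "I'm now waiting for IO", and I assume the IO has always finished by the time the program gets its next turn.

```python
from collections import deque


def program(name, steps):
    """A hypothetical program: each step is some CPU work followed by an IO wait."""
    for step in range(1, steps + 1):
        # The generator keeps `step` alive while suspended, much like a saved context.
        yield f"{name}: step {step} done, waiting for IO"


def run(programs):
    """Run programs first-come, first-served, switching at every IO wait."""
    ready = deque(programs)
    log = []
    while ready:
        current = ready.popleft()      # restore the next program's context
        try:
            log.append(next(current))  # run it until it waits for IO
            ready.append(current)      # store its context and move on
        except StopIteration:
            pass                       # the program finished; drop it
    return log


for line in run([program("A", 2), program("B", 2)]):
    print(line)
# A: step 1 done, waiting for IO
# B: step 1 done, waiting for IO
# A: step 2 done, waiting for IO
# B: step 2 done, waiting for IO
```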

Please note that concurrency is not parallelism. Because the CPU is so fast, it can switch between programs quickly enough to give the end user the illusion that the programs are running at the same time. However, a uniprocessor system can only run one program at any given moment.

To describe a running program together with its context, programmers coined the term process. Operating systems also introduced a new data structure, the Process Control Block (PCB), to help manage processes. A PCB contains critical information about its process, such as the process number and the process state.
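As a rough picture of what a PCB might hold, here is a minimal sketch. Only the process number and process state come from the description above; the `program_counter` and `registers` fields, the three states, and the `switch_on_io` helper are assumptions I added for illustration. Real operating systems store much more.

```python
from dataclasses import dataclass, field
from enum import Enum


class ProcessState(Enum):
    READY = "ready"      # loaded in memory, waiting for the CPU
    RUNNING = "running"  # currently executing on the CPU
    WAITING = "waiting"  # blocked until an IO operation finishes


@dataclass
class ProcessControlBlock:
    pid: int                                       # process number
    state: ProcessState = ProcessState.READY       # process state
    program_counter: int = 0                       # assumed field: next instruction
    registers: dict = field(default_factory=dict)  # assumed field: saved CPU registers


def switch_on_io(blocked: ProcessControlBlock, next_up: ProcessControlBlock) -> None:
    """Mark one process as waiting for IO and hand the CPU to another."""
    blocked.state = ProcessState.WAITING
    next_up.state = ProcessState.RUNNING


a = ProcessControlBlock(pid=1, state=ProcessState.RUNNING)
b = ProcessControlBlock(pid=2)
switch_on_io(a, b)
print(a.state.value, b.state.value)  # prints "waiting running"
```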

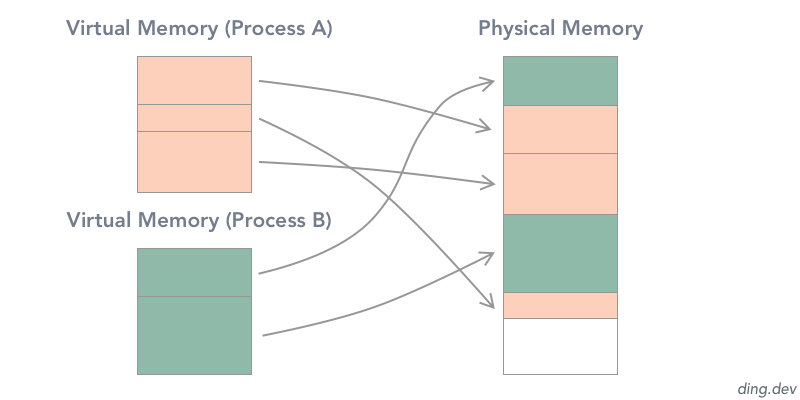

Since memory holds the context information of processes, how do we make sure the contexts of multiple processes don't interfere with each other? How do we isolate different processes in the physical memory space? The answer is Virtual Memory.

Virtual Memory is an abstraction of physical memory. With Virtual Memory, the memory space of each process is isolated. Each process sees the same range of virtual addresses, but those addresses map to different regions of physical memory for each process.
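The effect of this isolation is easy to observe from a program. The sketch below assumes a Unix-like system, because it explicitly asks Python's `multiprocessing` module for the `fork` start method; the `counter` variable and the function names are made up for the example. The child process changes its own copy of `counter`, and the parent never sees the change.

```python
import multiprocessing as mp

counter = 0  # lives in the parent's memory space


def increment(conn):
    """Runs in the child process and touches only the child's copy of counter."""
    global counter
    counter += 1
    conn.send(counter)  # report the child's view back through a pipe
    conn.close()


def child_and_parent_views():
    ctx = mp.get_context("fork")  # assumption: a Unix-like OS that supports fork
    parent_conn, child_conn = ctx.Pipe()
    child = ctx.Process(target=increment, args=(child_conn,))
    child.start()
    child_view = parent_conn.recv()
    child.join()
    return child_view, counter    # the parent's counter is untouched


if __name__ == "__main__":
    print(child_and_parent_views())  # prints (1, 0)
```

Notice that even reporting the child's value back to the parent already needs a pipe, because the two processes cannot simply read each other's variables.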

Thread

Thanks to multiprogramming and processes, programmers could finally run programs concurrently, which fundamentally increased the performance of computer systems. However, as the capacity of computer systems improved, people started to think about ways to run multiple tasks concurrently within a single process.

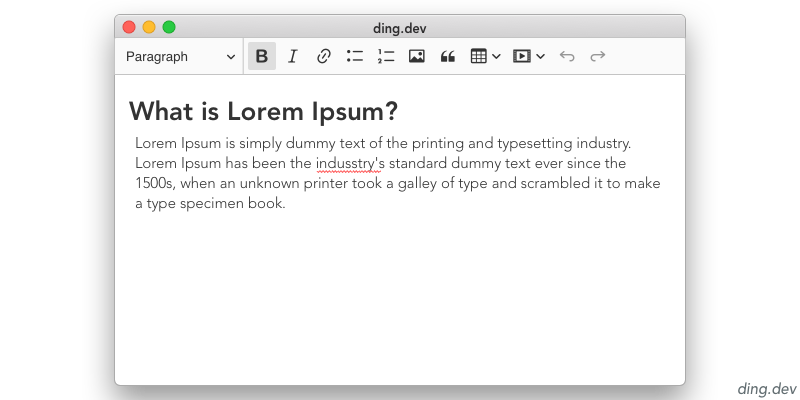

For example, suppose I'm working on a text editor and would like my editor to have a spell-check feature. As my text editor process can only do one thing at a time, there are two ways to implement this feature:

- Run the spell-check in a different process. But remember, processes don't share memory. For the spell-check process to read the content held by my text editor process, inter-process communication (IPC) is needed, which involves more work.

- Run the spell-check in the same process. Since a process can only do one thing at a time, the editor has to halt every time the spell-check runs.
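To give a feel for the extra work in the first option, here is a minimal sketch of a spell-check running in its own process and talking to the editor through a pipe. It again assumes a Unix-like system (the `fork` start method), and `KNOWN_WORDS`, `spell_check_worker`, and `check_in_other_process` are hypothetical names with a toy dictionary standing in for a real spell-checker.

```python
import multiprocessing as mp

KNOWN_WORDS = {"a", "process", "runs", "in", "its", "own", "memory"}  # toy dictionary


def spell_check_worker(conn):
    """Runs in a separate process: receive text, send back the unknown words."""
    text = conn.recv()
    conn.send([word for word in text.lower().split() if word not in KNOWN_WORDS])
    conn.close()


def check_in_other_process(text):
    ctx = mp.get_context("fork")     # assumption: a Unix-like OS that supports fork
    editor_conn, checker_conn = ctx.Pipe()
    checker = ctx.Process(target=spell_check_worker, args=(checker_conn,))
    checker.start()
    editor_conn.send(text)           # the text is copied to the other process
    misspelled = editor_conn.recv()  # and the result is copied back
    checker.join()
    return misspelled


if __name__ == "__main__":
    print(check_in_other_process("A process runs in its own memmory"))  # prints ['memmory']
```

Every piece of data crosses the process boundary as a copy, which is exactly the overhead that the editor would like to avoid.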

It would be awesome if a process could do multiple things at the same time, right? An early answer came in 1967 with IBM's OS/360 for the System/360, which implemented tasks that allowed multitasking within a single process. Nowadays, we call this technique Multithreading.

A process can have multiple threads, which share the same code and run within the context of the process. In the above example, a text editor process may have two threads:

- The primary thread handles keyboard input from the user and updates the content accordingly

- The other thread periodically checks the text content for spelling errors
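The two threads above could look roughly like this with Python's `threading` module. It is a sketch under my own assumptions: the `Editor` class, the toy `KNOWN_WORDS` dictionary, and the list of words standing in for keystrokes are all invented for the example. The point is that the spell-check thread reads `editor.text` directly, with no copying between memory spaces.

```python
import threading

KNOWN_WORDS = {"threads", "share", "memory"}  # toy dictionary


class Editor:
    def __init__(self):
        self.text = ""                 # shared by both threads
        self.misspelled = []
        self.lock = threading.Lock()   # guards text and misspelled
        self.done = threading.Event()  # set when the user stops typing

    def spell_check_loop(self, interval=0.01):
        """Background thread: periodically re-check the shared text."""
        while True:
            finished = self.done.wait(interval)  # sleep, or wake early when done
            with self.lock:
                words = self.text.lower().split()
                self.misspelled = [w for w in words if w not in KNOWN_WORDS]
            if finished:
                break                  # one final check has just been made


def edit(words):
    editor = Editor()
    checker = threading.Thread(target=editor.spell_check_loop)
    checker.start()
    for word in words:                 # primary thread: handle the "keystrokes"
        with editor.lock:
            editor.text += word + " "
    editor.done.set()
    checker.join()
    return editor.misspelled


print(edit(["Threads", "share", "memmory"]))  # prints ['memmory']
```

The price of sharing is the lock: since both threads touch the same data, they have to coordinate so that neither sees a half-finished update.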

Multithreading also involves context switching, which changes the execution context, such as the program counter, registers, and stack pointer. However, multithreading is typically more efficient than multiprocessing, because switching between processes also requires switching the virtual memory space, while switching between threads of the same process does not.

Multithreading has played a critical role in web servers. As web servers are usually IO-bound programs with many users, multithreading can help lower the response time of the servers by bringing concurrency to the program. Although multithreading is already more efficient than multiprocessing, programmers still actively seek better approaches to handle IO-bound programs. Modern programming languages implement another technique, called coroutines, which squeezes even more out of the computing resources. I will talk about it in another article.
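To illustrate the point about IO-bound servers, here is a small sketch that fakes a server's work with `time.sleep`. The `handle_request` function, the 0.05-second delay, and the pool size are assumptions chosen for the demo, not measurements from a real web server.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(request_id, io_delay=0.05):
    """Pretend to serve a request; the sleep stands in for a database or network call."""
    time.sleep(io_delay)
    return f"response {request_id}"


def serve_sequentially(request_ids):
    start = time.perf_counter()
    responses = [handle_request(r) for r in request_ids]
    return responses, time.perf_counter() - start


def serve_with_threads(request_ids, workers=8):
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        responses = list(pool.map(handle_request, request_ids))  # keeps request order
    return responses, time.perf_counter() - start


if __name__ == "__main__":
    requests = range(5)
    _, slow = serve_sequentially(requests)
    _, fast = serve_with_threads(requests)
    print(f"sequential: {slow:.2f}s, threaded: {fast:.2f}s")
```

While one thread sleeps on its fake IO, the others get to run, so the threaded version should finish in roughly the time of a single request instead of five.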

Summary

So we've talked about why processes and threads were created. Let's sum up their major differences.

- A process is a running program with its context information. A process typically consists of one or more threads that execute instructions concurrently.

- Processes run in isolated virtual memory space, while threads of the same process share the virtual memory space of that process.

- As processes run in isolated virtual memory spaces, it's relatively more complicated to share data between processes than between threads. To share data between processes, programmers have to implement inter-process communication (IPC).

- Both multiprocessing and multithreading involve context switching. However, context switching between threads of the same process doesn't involve switching the virtual memory space, making multithreading typically more efficient.

Hopefully, this resource helped you understand the differences between Process and Thread. If you have any additional insights that can improve this article, please don't hesitate to let me know.